[Python Data Analysis Case Study] (44): Oil Price Forecasting with EEMD-LSTM

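Background

It has been a while since I last posted anything on time series forecasting.

Earlier posts covered many hybrids of neural network models, such as CNN-LSTM and GRU-attention. This one combines a mode decomposition algorithm with a neural network.

EEMD-LSTM has been done to death in academia, but many beginners and people coming from other fields still don't know how to build this kind of hybrid forecast.

So this case study puts together a simple version. I happen to have some oil futures data, so we'll do some analysis, draw a few charts, and then compare forecasts.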

Data

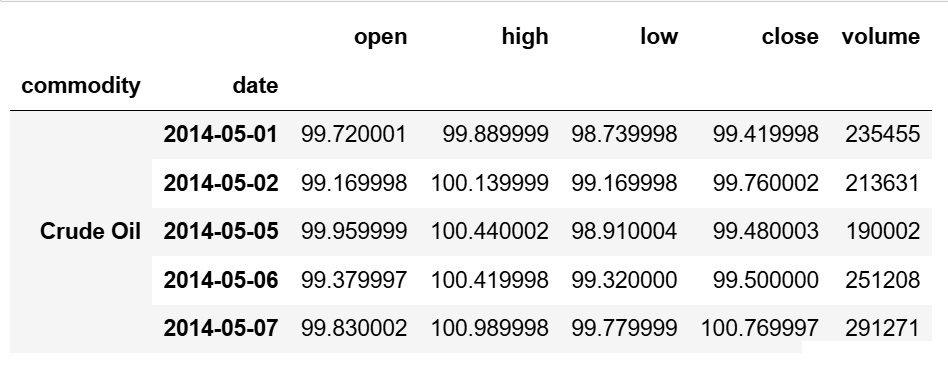

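The data is a single CSV file that looks like this. It is typical trading data: the first column is the crude oil ticker, the second is the commodity name, and the remaining columns include the open, high, low, and close prices.

Data for stocks, futures, bonds, funds, and indices generally looks much the same.

Below we visualize returns, volatility, and correlations across the different crude oil prices, and then use neural networks for forecasting.

The data and full code files for this case study are available here: 原油数据 (crude oil data)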

Code

As usual, start by importing the four data analysis staples.

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

sns.set_style('whitegrid')

plt.style.use("fivethirtyeight")

from datetime import datetime

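Load the data

Show the first five rows

df=pd.read_csv('oilprices.csv',parse_dates=['date'])

df.head()

Check how many rows each crude oil ticker has

df['ticker'].value_counts()

Do the same for the names and check that they match up

df['commodity'].value_counts()

The counts match those above, but there is a problem: the analysis that follows needs every series to cover the same dates, and here some commodities have more rows than others. To make them consistent, take the date range of the shortest series, Crude Oil, and apply it to every commodity.

df[df['commodity']=='Crude Oil']['date'].min(),df[df['commodity']=='Crude Oil']['date'].max()

So we keep the data from 2014-05-01 to 2024-05-03.

start_time='2014-05-01'
end_time='2024-05-03'
# Keep the common date range, drop the first column (ticker), and index by commodity and date
df=df[(df['date']<=end_time)&(df['date']>=start_time)].iloc[:,1:].set_index(['commodity','date'])
df.head()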
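To make the alignment step concrete, here is a minimal sketch on made-up data. The frame `toy`, the commodity names "A" and "B", and all the values are invented for illustration; only the filtering pattern mirrors the code above.

```python
import pandas as pd

# Hypothetical toy data: commodity "A" has a longer history than "B".
toy = pd.DataFrame({
    "commodity": ["A"] * 5 + ["B"] * 3,
    "date": list(pd.date_range("2024-01-01", periods=5))
            + list(pd.date_range("2024-01-02", periods=3)),
    "close": [10.0, 11.0, 12.0, 13.0, 14.0, 20.0, 21.0, 22.0],
})

# Take the date range of the shortest series and apply it to every commodity.
short = toy.loc[toy["commodity"] == "B", "date"]
start, end = short.min(), short.max()
aligned = toy[(toy["date"] >= start) & (toy["date"] <= end)]
aligned = aligned.set_index(["commodity", "date"])

# Every commodity now covers the same dates.
counts = aligned.groupby(level="commodity").size()
print(counts)
```

After the filter, both commodities have the same number of rows, which is what the later side-by-side plots and the correlation analysis rely on.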

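The result has a two-level index: the first level is the crude oil type and the second is the date, so the layout is easy to read.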

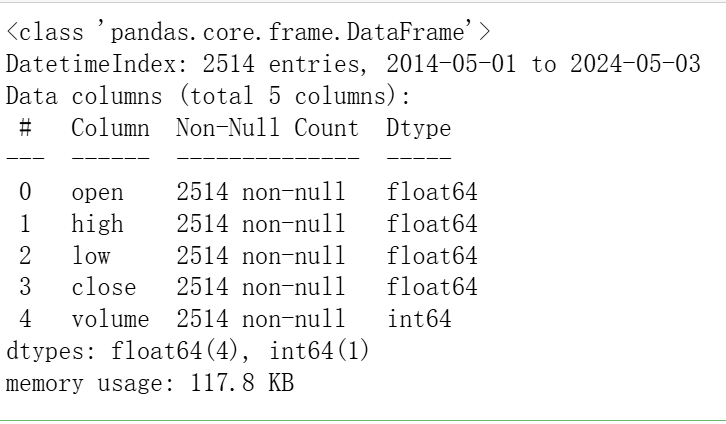

Descriptive statistics

We'll look at just one of the oils.

# Brent Crude Oil
df.loc['Brent Crude Oil'].describe()

df.loc['Brent Crude Oil'].info()

Closing prices

company_list=df.index.get_level_values(0).unique()
plt.figure(figsize=(18, 10),dpi=128)
plt.subplots_adjust(top=1.25, bottom=1.2)

for i, company in enumerate(company_list):
    plt.subplot(2, 3, i+1)
    df.loc[company]['close'].plot()
    plt.ylabel('close')
    plt.xlabel(None)
    plt.title(f"Closing Price of {company}")

plt.tight_layout()
plt.show()

Trading volume

plt.figure(figsize=(18, 10),dpi=128)
plt.subplots_adjust(top=1.25, bottom=1.2)

for i, company in enumerate(company_list, 1):
    plt.subplot(2, 3, i)
    df.loc[company]['volume'].plot()
    plt.ylabel('volume')
    plt.xlabel(None)
    plt.title(f"Sales Volume for {company}")

plt.tight_layout()
plt.show()

Moving averages

ma_day = [10, 20, 50]
ma_df=[]

for company in company_list:
    # Work on a copy so adding columns does not trigger SettingWithCopyWarning
    company_df=df.loc[company].copy()
    for ma in ma_day:
        column_name = f"MA for {ma} days"
        company_df[column_name] =company_df['close'].rolling(ma).mean()
    ma_df.append(company_df)

fig, axes = plt.subplots(nrows=2, ncols=3,dpi=128)
fig.set_figheight(10)
fig.set_figwidth(18)

ma_cols=['close', 'MA for 10 days', 'MA for 20 days', 'MA for 50 days']
# One panel per commodity; the sixth panel of the 2x3 grid stays empty
for ax, company_df, company in zip(axes.flat, ma_df, company_list):
    company_df[ma_cols].plot(ax=ax)
    ax.set_title(company)

fig.tight_layout()
plt.show()
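If the rolling mean is new to you, a tiny worked example may help. The series below is made up, and a 3-day window stands in for the 10, 20, and 50-day windows used above.

```python
import numpy as np
import pandas as pd

# Made-up closing prices, purely for illustration.
close = pd.Series([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])

# Each value is the mean of the current price and the two before it.
ma3 = close.rolling(3).mean()
print(ma3)

# The first window_size - 1 entries are NaN because the window is not full yet.
n_missing = int(ma3.isna().sum())
print(n_missing)
```

This is also why the moving-average lines in the charts start a little later than the closing price line.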

Daily returns

for company in ma_df:
    company['Daily Return'] = company['close'].pct_change()

# Then we'll plot the daily return percentage
fig, axes = plt.subplots(nrows=2, ncols=3,dpi=128)
fig.set_figheight(10)
fig.set_figwidth(18)

for ax, company_df, company in zip(axes.flat, ma_df, company_list):
    company_df['Daily Return'].plot(ax=ax, legend=True, linestyle='--', marker='o')
    ax.set_title(company)

fig.tight_layout()
plt.show()
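For reference, `pct_change()` computes today's price divided by yesterday's, minus one. A quick sketch with invented numbers:

```python
import numpy as np
import pandas as pd

# Made-up closing prices, purely for illustration.
close = pd.Series([100.0, 110.0, 99.0])

# Daily return: close[t] / close[t-1] - 1
ret = close.pct_change()
print(ret)

# The same thing written out by hand.
manual = close / close.shift(1) - 1
```

The first entry is NaN because there is no previous day to compare with; the other two are +10% and -10%.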

Histograms

plt.figure(figsize=(15, 10),dpi=128)

for i, company in enumerate(ma_df, 1):
    plt.subplot(2, 3, i)
    company['Daily Return'].hist(bins=50)
    plt.xlabel('Daily Return')
    plt.ylabel('Counts')
    plt.title(f'{company_list[i - 1]}')

plt.tight_layout()

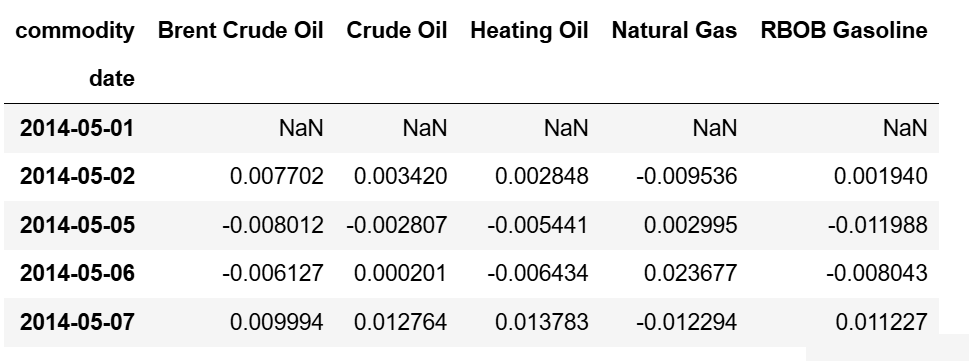

Correlation of returns

# Reshape to one column per commodity (rows are dates), then compute daily returns
tech_rets=df['close'].unstack().T.pct_change()
tech_rets.head()
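The `unstack().T` step is easy to misread, so here is a small sketch of what it does. The index values and prices are invented; only the reshape pattern matches the line above.

```python
import pandas as pd

# Hypothetical two-level index: (commodity, date), as in the main DataFrame.
idx = pd.MultiIndex.from_product(
    [["A", "B"], pd.date_range("2024-01-01", periods=4)],
    names=["commodity", "date"],
)
close = pd.Series([10.0, 11.0, 11.0, 12.1, 20.0, 21.0, 21.0, 22.05], index=idx)

# unstack() moves the dates into columns; .T flips it so rows are dates
# and each commodity gets its own column.
wide = close.unstack().T
rets = wide.pct_change()

# Pairwise correlation of the return columns.
corr = rets.corr()
print(wide.shape)
print(corr)
```

In this toy case the two return series move in lockstep, so their correlation comes out at essentially 1.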

Correlation plots

sns.jointplot(x='Brent Crude Oil', y='Crude Oil', data=tech_rets, kind='scatter')

Scatter plots with regression lines for the returns of every pair of crude oils

sns.pairplot(tech_rets, kind='reg')

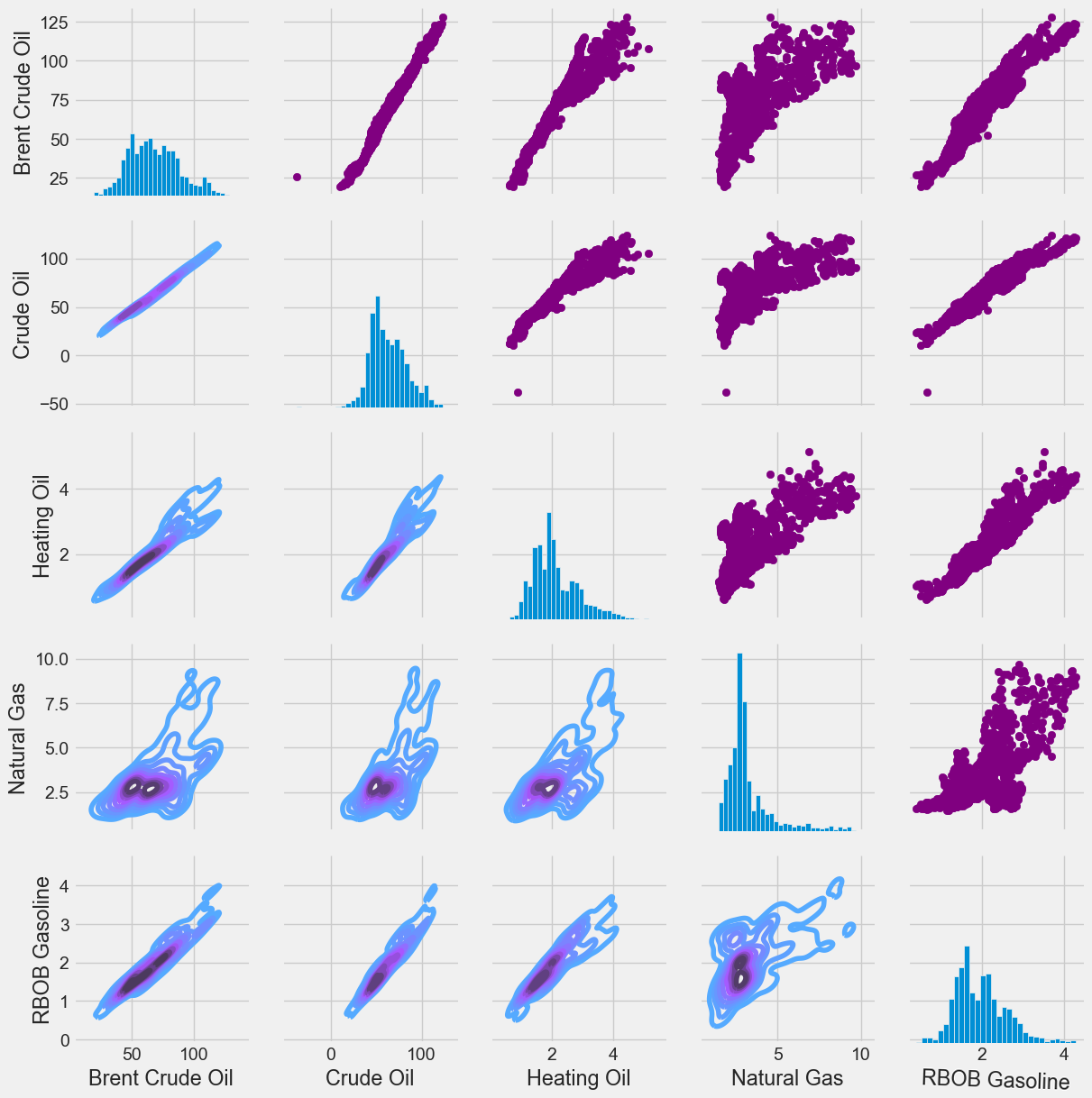

sns.PairGrid() on returns

The relationships between their returns

return_fig = sns.PairGrid(tech_rets.dropna())

# Using map_upper we can specify what the upper triangle will look like.
return_fig.map_upper(plt.scatter, color='purple')

# We can also define the lower triangle in the figure, including the plot type (kde)
# or the color map (BluePurple)
return_fig.map_lower(sns.kdeplot, cmap='cool_d')

# Finally we'll define the diagonal as a series of histogram plots of the daily return
return_fig.map_diag(plt.hist, bins=30)

sns.PairGrid() on closing prices

returns_fig = sns.PairGrid(df['close'].unstack().T)

# Using map_upper we can specify what the upper triangle will look like.
returns_fig.map_upper(plt.scatter,color='purple')

# We can also define the lower triangle in the figure, including the plot type (kde) or the color map (BluePurple)
returns_fig.map_lower(sns.kdeplot,cmap='cool_d')

# Finally we'll define the diagonal as a series of histogram plots of the closing price
returns_fig.map_diag(plt.hist,bins=30)

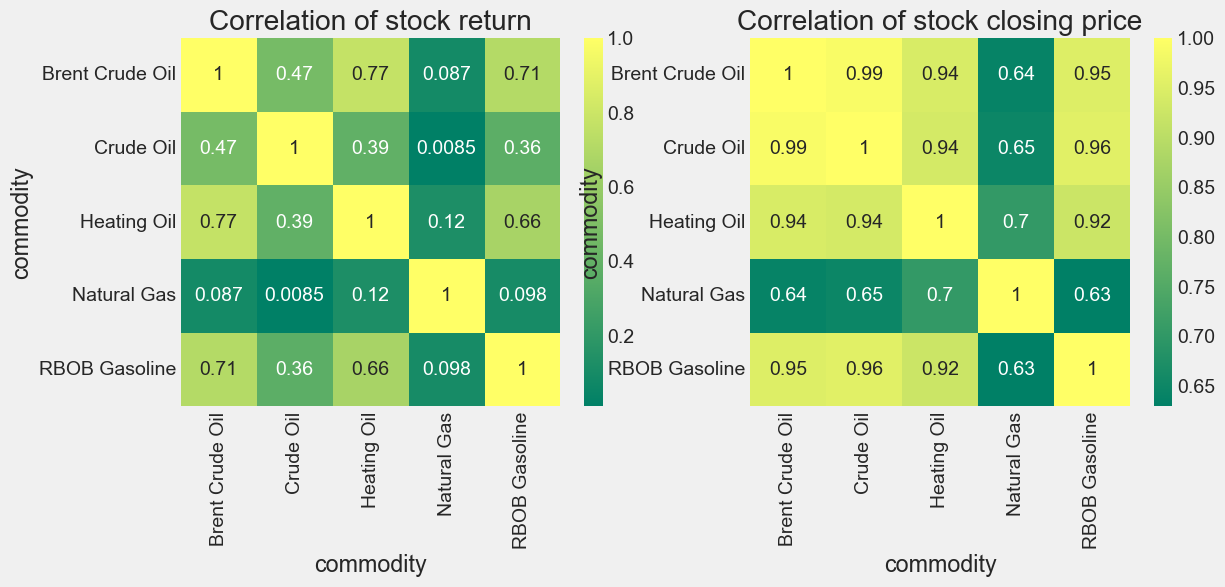

Correlation heatmaps

plt.figure(figsize=(12, 10))

plt.subplot(2, 2, 1)

sns.heatmap(tech_rets.corr(), annot=True, cmap='summer')

plt.title('Correlation of stock return')

plt.subplot(2, 2, 2)

sns.heatmap(df['close'].unstack().T.corr(), annot=True, cmap='summer')

plt.title('Correlation of stock closing price')

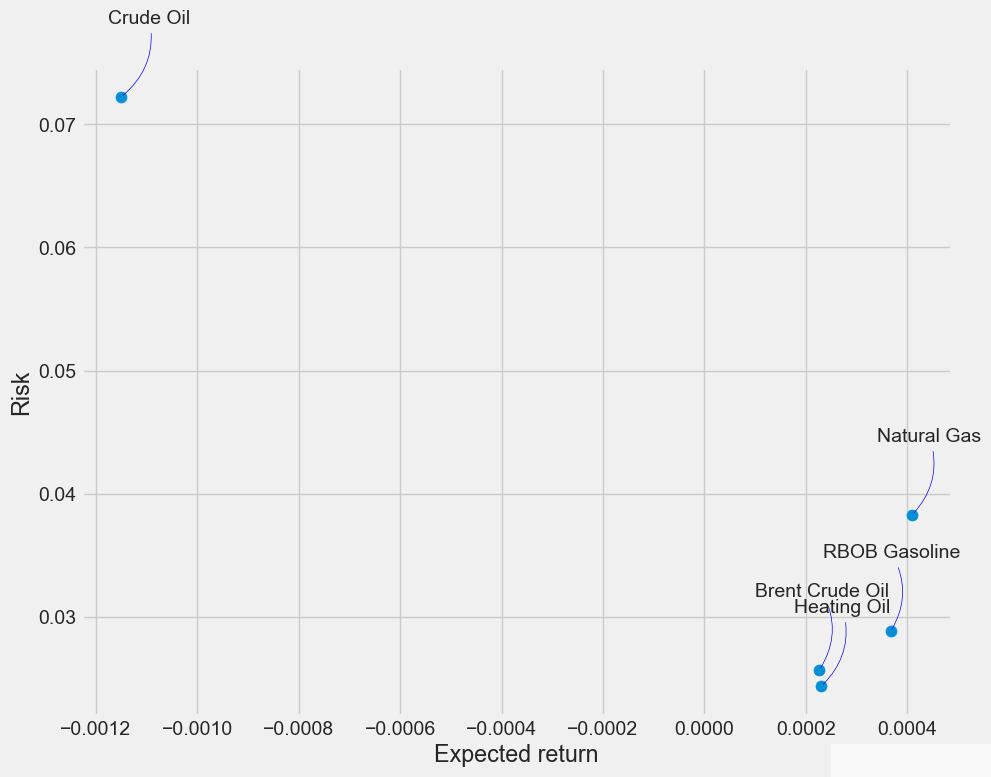

Risk

rets = tech_rets.dropna()
area = np.pi * 20

plt.figure(figsize=(10, 8))
plt.scatter(rets.mean(), rets.std(), s=area)
plt.xlabel('Expected return')
plt.ylabel('Risk')

for label, x, y in zip(rets.columns, rets.mean(), rets.std()):
    plt.annotate(label, xy=(x, y), xytext=(50, 50), textcoords='offset points', ha='right', va='bottom',
                 arrowprops=dict(arrowstyle='-', color='blue', connectionstyle='arc3,rad=-0.3'))

This is the classic risk-return picture: the mean daily return on the x-axis against its standard deviation (the risk) on the y-axis. It is the same mean-variance view that the capital asset pricing model builds on.
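The numbers behind that scatter plot are just two column statistics. A minimal sketch with invented returns (the names "A" and "B" and the `summary` frame are for illustration only):

```python
import pandas as pd

# Made-up daily returns for two hypothetical commodities.
rets = pd.DataFrame({
    "A": [0.01, -0.01, 0.02, 0.00],
    "B": [0.05, -0.04, 0.03, -0.02],
})

# x-axis of the plot: mean daily return; y-axis: standard deviation of returns.
summary = pd.DataFrame({
    "expected_return": rets.mean(),
    "risk": rets.std(),
})
print(summary)
```

Here both series have the same mean return, but "B" swings much more from day to day, so it would sit higher on the risk axis.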

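I haven't written much analysis for the charts above because they are all fairly self-explanatory. Forecasting is the real focus, so let's get to it.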

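Forecasting Brent Crude Oil

From here on we forecast a single series, the Brent Crude Oil price. The other oils work the same way, and so does any other time series you swap in.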

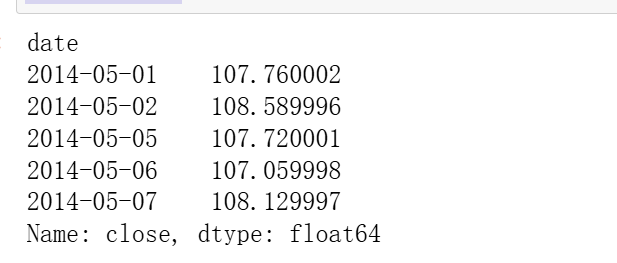

Get the data:

df_Brent=df.loc['Brent Crude Oil']
df_Brent

The corresponding closing price chart

plt.figure(figsize=(16,6))

plt.title('Close Price History')

plt.plot(df_Brent['close'])

plt.xlabel('Date', fontsize=18)

plt.ylabel('Close Price', fontsize=18)

plt.show()

LSTM forecasting

We start with a plain LSTM forecast.

import os
import math
import time
import random as rn

from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler,StandardScaler
from sklearn.metrics import mean_absolute_error
from sklearn.metrics import mean_squared_error,r2_score

import tensorflow as tf
import keras
from keras.layers import Layer
import keras.backend as K
from keras.models import Model, Sequential
from keras.layers import GRU, Dense,Conv1D, MaxPooling1D,GlobalMaxPooling1D,Embedding,Dropout,Flatten,SimpleRNN,LSTM
from keras.callbacks import EarlyStopping
#from tensorflow.keras import regularizers
#from keras.utils.np_utils import to_categorical
from tensorflow.keras import optimizers

Take the closing price

data0=df_Brent['close']
data0.head()

Custom functions to build the training and test sets

def build_sequences(text, window_size=24):
    # text: 1-D sequence of values
    x, y = [],[]
    for i in range(len(text) - window_size):
        sequence = text[i:i+window_size]
        target = text[i+window_size]
        x.append(sequence)
        y.append(target)
    return np.array(x), np.array(y)

def get_traintest(data,train_ratio=0.8,window_size=24):
    # Use the length of the argument, not the global data0
    train_size=int(len(data)*train_ratio)
    train=data[:train_size]
    # Start the test slice window_size steps early so the first test target has a full window
    test=data[train_size-window_size:]
    X_train,y_train=build_sequences(train,window_size=window_size)
    X_test,y_test=build_sequences(test,window_size=window_size)
    return X_train,y_train,X_test,y_test
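To see what the sliding window produces, here is a small self-contained sketch on the integers 0 to 9. The function is a restatement of `build_sequences` above; the window size of 3 and the split point of 8 are arbitrary choices for the demo.

```python
import numpy as np

def build_sequences(series, window_size):
    """Turn a 1-D series into (window, next value) pairs."""
    x, y = [], []
    for i in range(len(series) - window_size):
        x.append(series[i:i + window_size])
        y.append(series[i + window_size])
    return np.array(x), np.array(y)

series = np.arange(10)
X, y = build_sequences(series, window_size=3)
print(X.shape, y.shape)
print(X[0], y[0])  # first window and the value it should predict

# Test split: start window_size steps before the boundary so the
# first test target (index 8) still has a full window behind it.
train_size = 8
test = series[train_size - 3:]
X_test, y_test = build_sequences(test, window_size=3)
print(y_test)
```

Each row of `X` is one window and the matching entry of `y` is the value right after it. The test targets are exactly the points from the split boundary onward, which is why the test slice starts `window_size` steps early.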

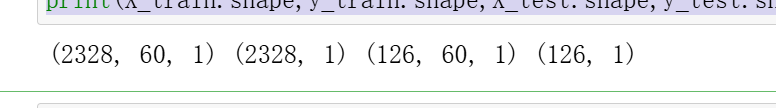

Split into training and test sets

train_ratio=0.95     # share of the data used for training
window_size=60       # sliding window size, i.e. the number of time steps fed to the recurrent network
X_train,y_train,X_test,y_test=get_traintest(np.array(data0).reshape(-1,),window_size=window_size,train_ratio=train_ratio)
print(X_train.shape,y_train.shape,X_test.shape,y_test.shape)

Normalize the data

# Min-max scaling, fitted on the training set only
scaler = MinMaxScaler()
scaler = scaler.fit(X_train)
X_train=scaler.transform(X_train)
X_test=scaler.transform(X_test)

y_train_orage=y_train.copy()
y_scaler = MinMaxScaler()
y_scaler = y_scaler.fit(y_train.reshape(-1,1))
y_train=y_scaler.transform(y_train.reshape(-1,1))
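For anyone unsure what the target scaler does and why predictions need `inverse_transform` later, here is the arithmetic written out with plain NumPy. This is a sketch of min-max scaling to the default (0, 1) range, not a replacement for `MinMaxScaler`; the prices are invented.

```python
import numpy as np

# Made-up training targets.
y_train = np.array([50.0, 60.0, 80.0, 100.0])

# Fit: remember the training minimum and maximum.
y_min, y_max = y_train.min(), y_train.max()

def scale(v):
    # Map the training range onto [0, 1].
    return (v - y_min) / (y_max - y_min)

def inverse(s):
    # Map scaled values back to the original price units.
    return s * (y_max - y_min) + y_min

scaled = scale(y_train)
restored = inverse(scaled)
print(scaled)
print(restored)

# A test value above the training maximum scales to more than 1.
above = scale(np.array([110.0]))
print(above)
```

The model is trained on the scaled targets, so its outputs live on the scaled range and have to be mapped back before computing errors in price units.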

Reshape the inputs to 3-D (samples, time steps, features) and check the shapes

X_train=X_train.reshape(X_train.shape[0],X_train.shape[1],1)
X_test=X_test.reshape(X_test.shape[0],X_test.shape[1],1)
y_test=y_test.reshape(-1,1)
test_size=y_test.shape[0]
print(X_train.shape,y_train.shape,X_test.shape,y_test.shape)

Visualize the train/test split

plt.figure(figsize=(10,5),dpi=256)

plt.plot(data0.index[:-test_size],data0.iloc[:-test_size],label='Train',color='#FA9905')

plt.plot(data0.index[-test_size:],data0.iloc[-(test_size):],label='Test',color='#FB8498',linestyle='dashed')

plt.legend()

plt.ylabel('Predict Series',fontsize=16)

plt.xlabel('Time',fontsize=16)

plt.show()

Custom functions to fix the random seeds and compute the evaluation metrics

def set_my_seed():
    os.environ['PYTHONHASHSEED'] = '0'
    np.random.seed(1)
    rn.seed(12345)
    tf.random.set_seed(123)

def evaluation(y_test, y_predict):
    mae = mean_absolute_error(y_test, y_predict)
    mse = mean_squared_error(y_test, y_predict)
    rmse = np.sqrt(mean_squared_error(y_test, y_predict))
    # y_test and y_predict must have the same shape here
    mape=(abs(y_predict -y_test)/ y_test).mean()
    #r_2=r2_score(y_test, y_predict)
    return mse, rmse, mae, mape
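Here are the same four metrics written out with plain NumPy on invented numbers, plus one shape pitfall worth knowing about. This is a sketch to show the arithmetic, not the `evaluation` function itself.

```python
import numpy as np

# Made-up actual and predicted values.
y_true = np.array([100.0, 200.0, 400.0])
y_pred = np.array([110.0, 190.0, 400.0])

err = y_pred - y_true
mae = np.mean(np.abs(err))            # mean absolute error
mse = np.mean(err ** 2)               # mean squared error
rmse = np.sqrt(mse)                   # root mean squared error
mape = np.mean(np.abs(err) / y_true)  # mean absolute percentage error (as a fraction)
print(mae, mse, rmse, mape)

# Pitfall: if one array is (n, 1) and the other is (n,), NumPy broadcasts
# the subtraction to an (n, n) matrix and the MAPE silently comes out wrong.
bad = np.abs(y_pred.reshape(-1, 1) - y_true) / y_true
print(bad.shape)
```

Flattening both arrays with `.reshape(-1)` before computing the MAPE avoids the broadcasting problem.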

Build the model

def build_model(X_train,mode='LSTM',hidden_dim=[32,16]):
    set_my_seed()
    model = Sequential()
    if mode=='MLP':
        model.add(Dense(hidden_dim[0],activation='relu',input_shape=(X_train.shape[-2],X_train.shape[-1])))
        model.add(Flatten())
        model.add(Dense(hidden_dim[1],activation='relu'))
    elif mode=='LSTM':
        # Two stacked LSTM layers; the first returns the full sequence for the second
        model.add(LSTM(hidden_dim[0],return_sequences=True, input_shape=(X_train.shape[-2],X_train.shape[-1])))
        model.add(LSTM(hidden_dim[1]))
    model.add(Dense(1))
    model.compile(optimizer='Adam', loss='mse',metrics=[tf.keras.metrics.RootMeanSquaredError(),"mape","mae"])
    return model

Custom plotting functions

def plot_loss(hist,imfname=''):
    # One panel per tracked quantity: loss, RMSE, MAPE, MAE
    plt.subplots(1,4,figsize=(16,4))
    for i,key in enumerate(hist.history.keys()):
        n=int(str('14')+str(i+1))
        plt.subplot(n)
        plt.plot(hist.history[key], 'k', label=f'Training {key}')
        plt.title(f'{imfname} Training {key}')
        plt.xlabel('Epochs')
        plt.ylabel(key)
        plt.legend()
    plt.tight_layout()
    plt.show()

def plot_fit(y_test, y_pred):
    plt.figure(figsize=(4,2))
    plt.plot(y_test, color="red", label="actual")
    plt.plot(y_pred, color="blue", label="predict")
    plt.title(f"predict vs. actual")
    plt.xlabel("Time")
    plt.ylabel('close')
    plt.legend()
    plt.show()

Custom training function

df_eval_all=pd.DataFrame(columns=['MSE','RMSE','MAE','MAPE'])
df_preds_all=pd.DataFrame()

def train_fuc(mode='LSTM',batch_size=32,epochs=50,hidden_dim=[32,16],verbose=0,show_loss=True,show_fit=True):
    # Build and train the model
    s = time.time()
    set_my_seed()
    model=build_model(X_train=X_train,mode=mode,hidden_dim=hidden_dim)
    earlystop = EarlyStopping(monitor='loss', min_delta=0, patience=5)
    hist=model.fit(X_train, y_train,batch_size=batch_size,epochs=epochs,callbacks=[earlystop],verbose=verbose)
    if show_loss:
        plot_loss(hist)

    # Predict and map the output back to price units
    y_pred = model.predict(X_test)
    y_pred = y_scaler.inverse_transform(y_pred)
    #print(f'shape of actual y: {y_test.shape}, shape of predicted y: {y_pred.shape}')
    if show_fit:
        plot_fit(y_test, y_pred)
    e=time.time()
    print(f"Running time: {round(e-s,3)}")
    df_preds_all[mode]=y_pred.reshape(-1,)

    # Store and print the metrics
    s=list(evaluation(y_test, y_pred))
    df_eval_all.loc[f'{mode}',:]=s
    s=[round(i,3) for i in s]
    print(f'{mode} results: MSE:{s[0]},RMSE:{s[1]},MAE:{s[2]},MAPE:{s[3]}')
    print("=======================================Done==========================================")
    return s[0]

Initialize the parameters

window_size=60
batch_size=32
epochs=50
hidden_dim=[32,16]

verbose=0
show_fit=True
show_loss=True
mode='LSTM'  # or 'MLP'

Train the LSTM

train_fuc(mode='LSTM',batch_size=batch_size,epochs=epochs,hidden_dim=hidden_dim)

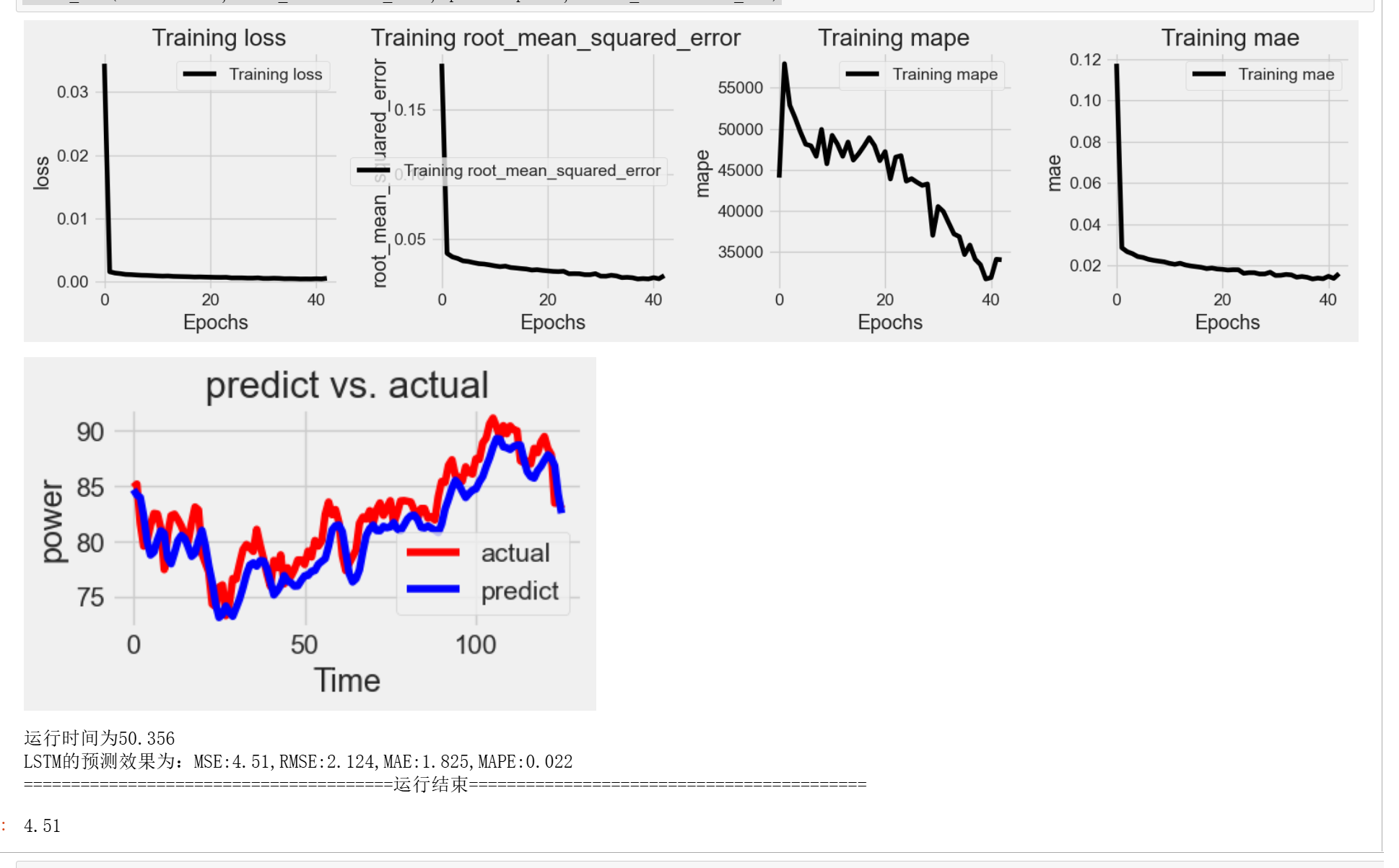

This prints how several metrics change during training, along with the forecast against the actual values.

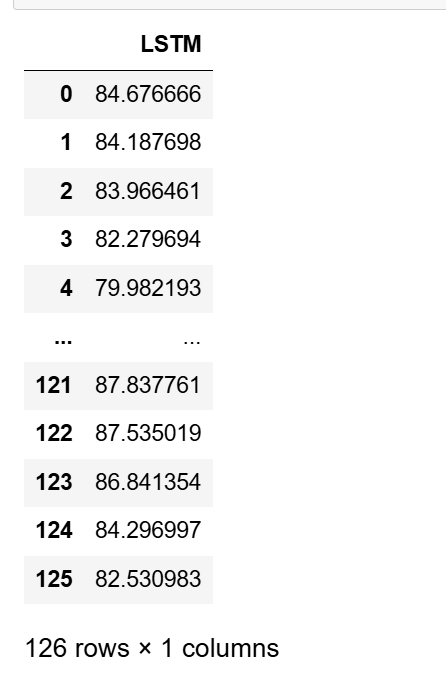

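Look at the predictions

df_preds_all

Next we run EEMD first and then forecast with the LSTM, and compare the results.

EEMD-LSTM forecasting

A custom function to run the mode decomposition and plot it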

from PyEMD import EMD, EEMD, CEEMDAN,Visualisation

def plot_imfs(data, method='EEMD',max_imf=4):
    # Extract the signal
    S = data[:,0]
    t = np.arange(len(S))

    # Initialize the decomposition algorithm for the chosen method
    if method == 'EMD':
        decomposer = EMD()
        imfs = decomposer.emd(S,max_imf=max_imf)
    elif method == 'EEMD':
        decomposer = EEMD()
        imfs = decomposer.eemd(S,max_imf=max_imf)
    elif method == 'CEEMDAN':
        decomposer = CEEMDAN()
        decomposer.ceemdan(S,max_imf=max_imf)
        imfs, res = decomposer.get_imfs_and_residue()
    else:
        raise ValueError("Unsupported method. Choose 'EMD', 'EEMD', or 'CEEMDAN'.")

    # Colors to cycle through
    colors = ['r', 'g', 'b', 'c', 'm', 'y', 'k']

    # Plot the original data and the IMFs
    plt.figure(figsize=(8,len(imfs)+1), dpi=128)
    plt.subplot(len(imfs) + 1, 1, 1)
    plt.plot(t, S, 'k',lw=1)  # original data in black
    plt.ylabel('close')

    # Plot each IMF, in reversed order (lowest frequency first)
    for n, imf in enumerate(imfs[::-1]):
        plt.subplot(len(imfs) + 1, 1, n + 2)
        plt.plot(t, imf, colors[n % len(colors)])
        plt.ylabel(f'IMF {n+1}')

    plt.tight_layout()
    plt.show()

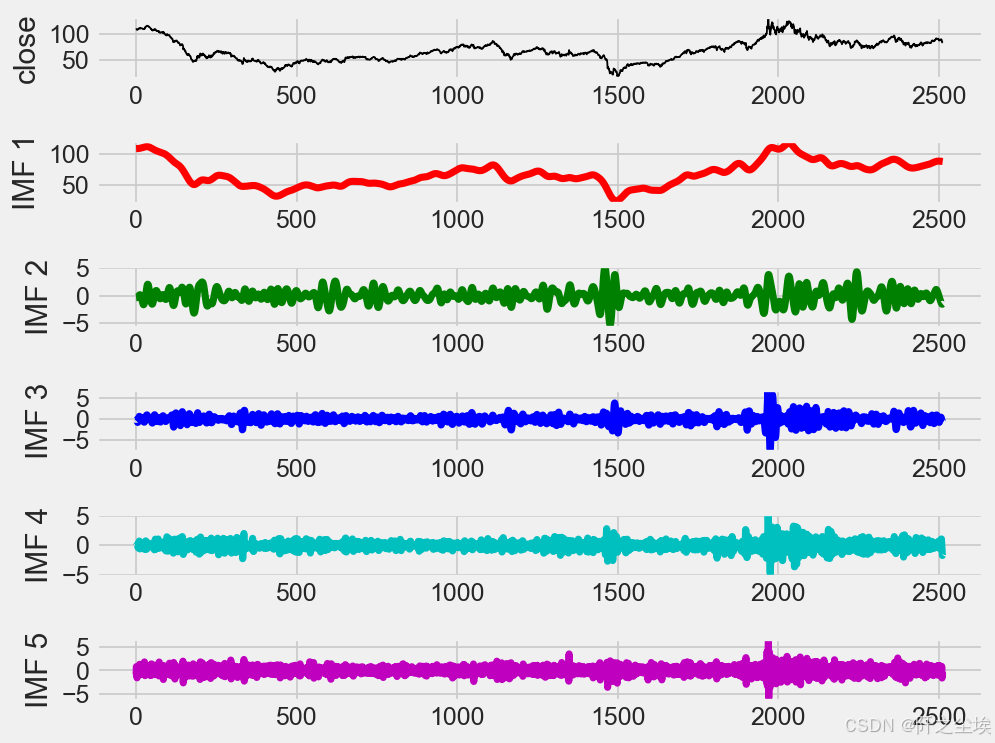

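Mode decomposition

plot_imfs(data0.to_numpy().reshape(-1,1))

Extract the decomposed values

decomposer = EEMD()
imfs = decomposer.eemd(data0.to_numpy(),max_imf=4)
imfs.shape

# Note: df is reused from here on and now holds the components, one column each
df=pd.DataFrame()
for i in range(len(imfs)):
    df['imf'+str(i+1)]=imfs[::-1][i,:]

# Column names of the components; the training function below loops over these
df_names=list(df.columns)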

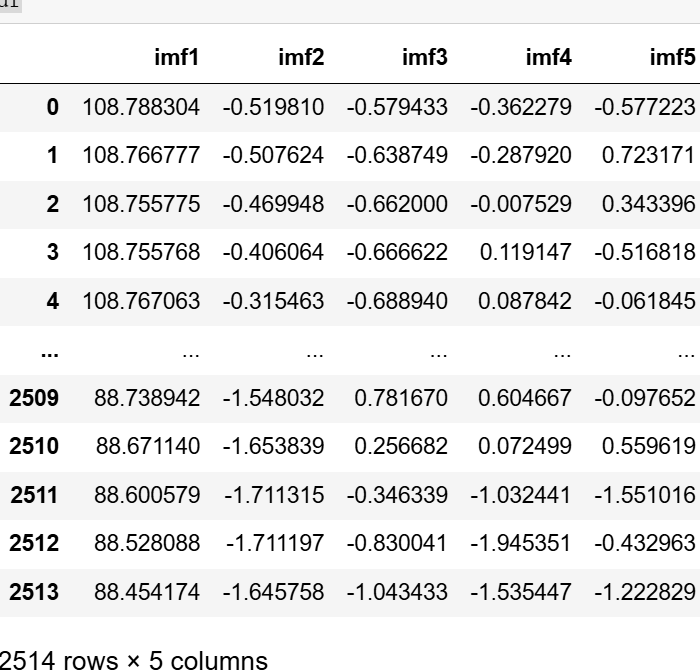

df

The series has been decomposed into 5 components.

As before, we define a few functions to get ready for training EEMD-LSTM.

def build_sequences(text, window_size=24):
    # text: 1-D sequence of values
    x, y = [],[]
    for i in range(len(text) - window_size):
        sequence = text[i:i+window_size]
        target = text[i+window_size]
        x.append(sequence)
        y.append(target)
    return np.array(x), np.array(y)

def get_traintest(data,train_size=len(df),window_size=24):
    train=data[:train_size]
    test=data[train_size-window_size:]
    X_train,y_train=build_sequences(train,window_size=window_size)
    X_test,y_test=build_sequences(test,window_size=window_size)
    return X_train,y_train,X_test,y_test

def evaluation(y_test, y_predict):
    mae = mean_absolute_error(y_test, y_predict)
    mse = mean_squared_error(y_test, y_predict)
    rmse = math.sqrt(mean_squared_error(y_test, y_predict))
    mape=(abs(y_predict -y_test)/ y_test).mean()
    return mae, rmse, mape

Model-building and plotting functions

def build_model(X_train,mode='LSTM',hidden_dim=[32,16]):
    set_my_seed()
    if mode=='MLP':
        model = Sequential()
        model.add(Dense(hidden_dim[0],activation='relu',input_shape=(X_train.shape[-1],)))
        model.add(Dense(hidden_dim[1],activation='relu'))
        model.add(Dense(1))
    elif mode=='EEMD-LSTM':
        model = Sequential()
        model.add(LSTM(hidden_dim[0],return_sequences=True, input_shape=(X_train.shape[-2],X_train.shape[-1])))
        model.add(LSTM(hidden_dim[1]))
        model.add(Dense(1))
    model.compile(optimizer='Adam', loss='mse',metrics=[tf.keras.metrics.RootMeanSquaredError(),"mape","mae"])
    return model

def plot_loss(hist,imfname):
    plt.subplots(1,4,figsize=(16,2))
    for i,key in enumerate(hist.history.keys()):
        n=int(str('14')+str(i+1))
        plt.subplot(n)
        plt.plot(hist.history[key], 'k', label=f'Training {key}')
        plt.title(f'{imfname} Training {key}')
        plt.xlabel('Epochs')
        plt.ylabel(key)
        plt.legend()
    plt.tight_layout()
    plt.show()

def evaluation_all(df_eval_all,mode,show_fit=True):
    # The final forecast is the sum of the per-component forecasts (every column except 'actual')
    df_eval_all['all_pred']=df_eval_all.iloc[:,1:].sum(axis=1)
    MAE2,RMSE2,MAPE2=evaluation(df_eval_all['actual'],df_eval_all['all_pred'])
    df_eval_all.rename(columns={'all_pred':'predict'},inplace=True)
    if show_fit:
        df_eval_all.loc[:,['predict','actual']].plot(figsize=(12,4),title=f'EEMD+{mode} predict')
    print('Overall results:')
    print(f'EEMD+{mode} results: mae:{MAE2}, rmse:{RMSE2} ,mape:{MAPE2}')
    df_preds_all[mode]=df_eval_all['predict'].to_numpy()

Training function

def train_fuc(mode='EEMD-LSTM',train_rat=0.8,window_size=24,batch_size=32,epochs=100,hidden_dim=[32,16],show_imf=True,show_loss=True,show_fit=True):
    df_all=df.copy()
    train_size=int(len(df_all)*train_rat)
    df_eval_all=pd.DataFrame(data0.values[train_size:],columns=['actual'])

    # Train one model per component
    for i,name in enumerate(df_names):
        print(f'Training component: {name}')
        data=df_all[name]
        X_train,y_train,X_test,y_test=get_traintest(data.values,window_size=window_size,train_size=train_size)

        # Normalize
        scaler = MinMaxScaler()
        scaler = scaler.fit(X_train)
        X_train = scaler.transform(X_train)
        X_test = scaler.transform(X_test)

        scaler_y = MinMaxScaler()
        scaler_y = scaler_y.fit(y_train.reshape(-1,1))
        y_train = scaler_y.transform(y_train.reshape(-1,1))

        if mode!='MLP':
            X_train = X_train.reshape((X_train.shape[0], X_train.shape[1], 1))
            X_test = X_test.reshape((X_test.shape[0], X_test.shape[1], 1))

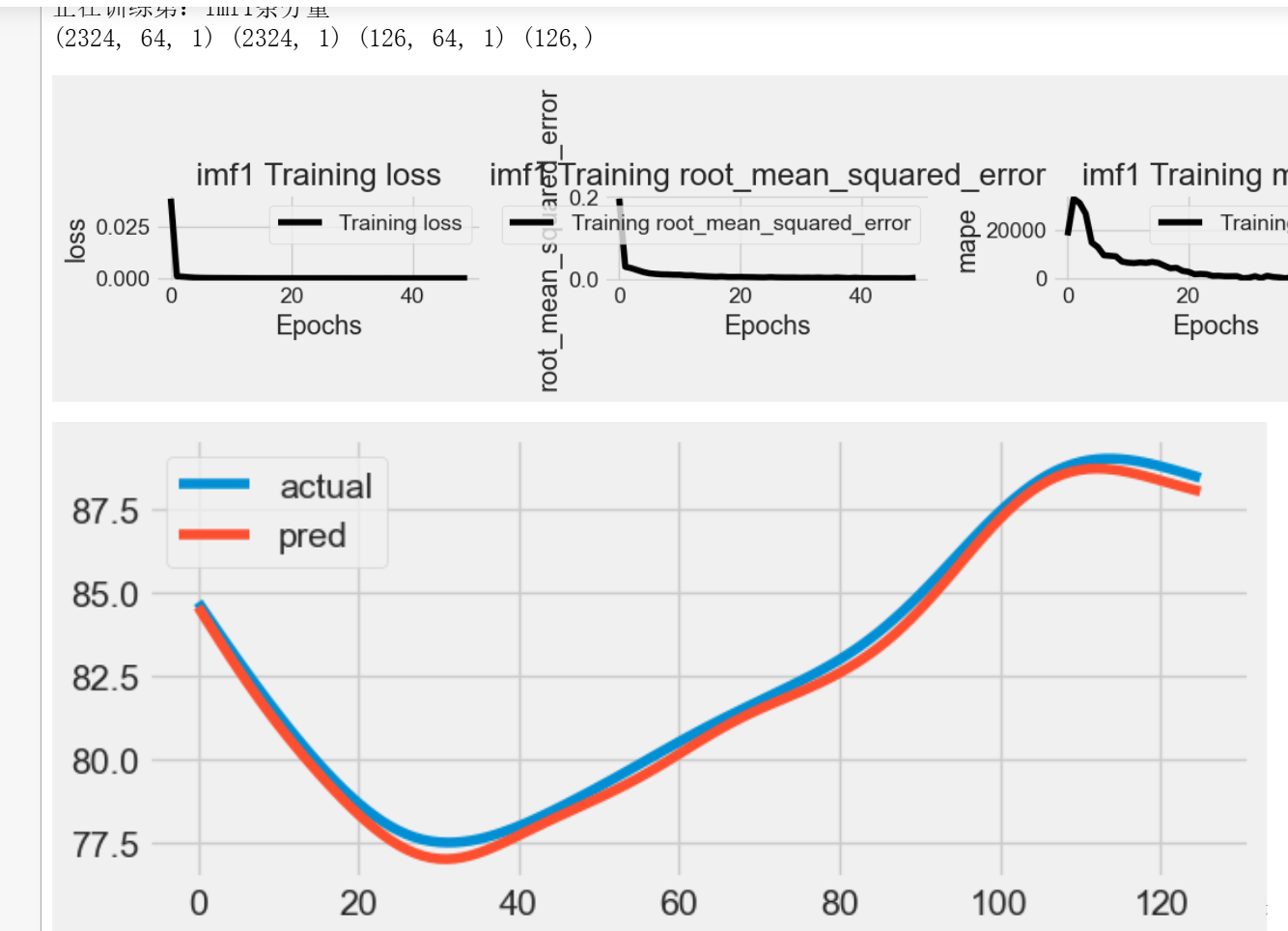

        print(X_train.shape, y_train.shape, X_test.shape,y_test.shape)
        set_my_seed()
        model=build_model(X_train=X_train,mode=mode,hidden_dim=hidden_dim)
        start = datetime.now()
        hist=model.fit(X_train, y_train,batch_size=batch_size,epochs=epochs,verbose=0)
        if show_loss:
            plot_loss(hist,name)

        # Predict; flatten to 1-D so it lines up with y_test instead of broadcasting to (n, n)
        y_pred = model.predict(X_test)
        y_pred =scaler_y.inverse_transform(y_pred).reshape(-1)
        #print(y_pred.shape)
        end = datetime.now()

        if show_imf:
            df_eval=pd.DataFrame()
            df_eval['actual']=y_test
            df_eval['pred']=y_pred
            df_eval.plot(figsize=(7,3))
            plt.show()

        mae, rmse, mape=evaluation(y_test=y_test, y_predict=y_pred)
        elapsed=end-start   # renamed so it does not shadow the time module
        df_eval_all[name+'_pred']=y_pred
        print(f'running time is {elapsed}')
        print(f'{name} component results: mae:{mae}, rmse:{rmse} ,mape:{mape}')
        print('============================================================================================================================')

    # Sum the component forecasts and evaluate the combined forecast
    evaluation_all(df_eval_all,mode=mode,show_fit=show_fit)

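Start training

train_rat=0.95
set_my_seed()
train_fuc(mode='EEMD-LSTM',window_size=window_size,train_rat=train_rat,batch_size=batch_size,epochs=epochs,hidden_dim=hidden_dim)

This shows the training process, results, and metrics for every component, so it is quite thorough. The full output is too long to include here.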
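The overall recipe is: decompose the series, forecast each component separately, and add the component forecasts back together. The sketch below shows only that skeleton, with loud stand-ins so it runs without PyEMD or TensorFlow: a moving-average trend plus remainder replaces EEMD, and a one-step persistence forecast replaces the LSTM. The signal, the 21-point window, and `train_size` are all invented for the demo.

```python
import numpy as np

# Synthetic signal: trend + cycle + noise (made up for the demo).
rng = np.random.default_rng(0)
t = np.arange(200)
signal = 0.05 * t + np.sin(2 * np.pi * t / 20) + 0.1 * rng.standard_normal(200)

# Stand-in decomposition (NOT EEMD): moving-average trend and what is left over.
kernel = np.ones(21) / 21
trend = np.convolve(signal, kernel, mode="same")
remainder = signal - trend
components = np.vstack([trend, remainder])

# The components add back up to the original series.
reconstruction_error = np.max(np.abs(components.sum(axis=0) - signal))

# Stand-in forecaster (NOT an LSTM): predict each point as the previous value.
train_size = 160
component_preds = [comp[train_size - 1:-1] for comp in components]

# Final forecast: sum of the per-component forecasts.
ensemble_pred = np.sum(component_preds, axis=0)
actual = signal[train_size:]
rmse = np.sqrt(np.mean((ensemble_pred - actual) ** 2))
print(reconstruction_error, rmse)
```

With real EEMD components the idea is that each one is smoother and easier to model than the raw price, so a separate model per component can do better than one model on the raw series. The persistence stand-in here does not show that benefit; it only shows how the pieces fit together.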

Look at the predictions

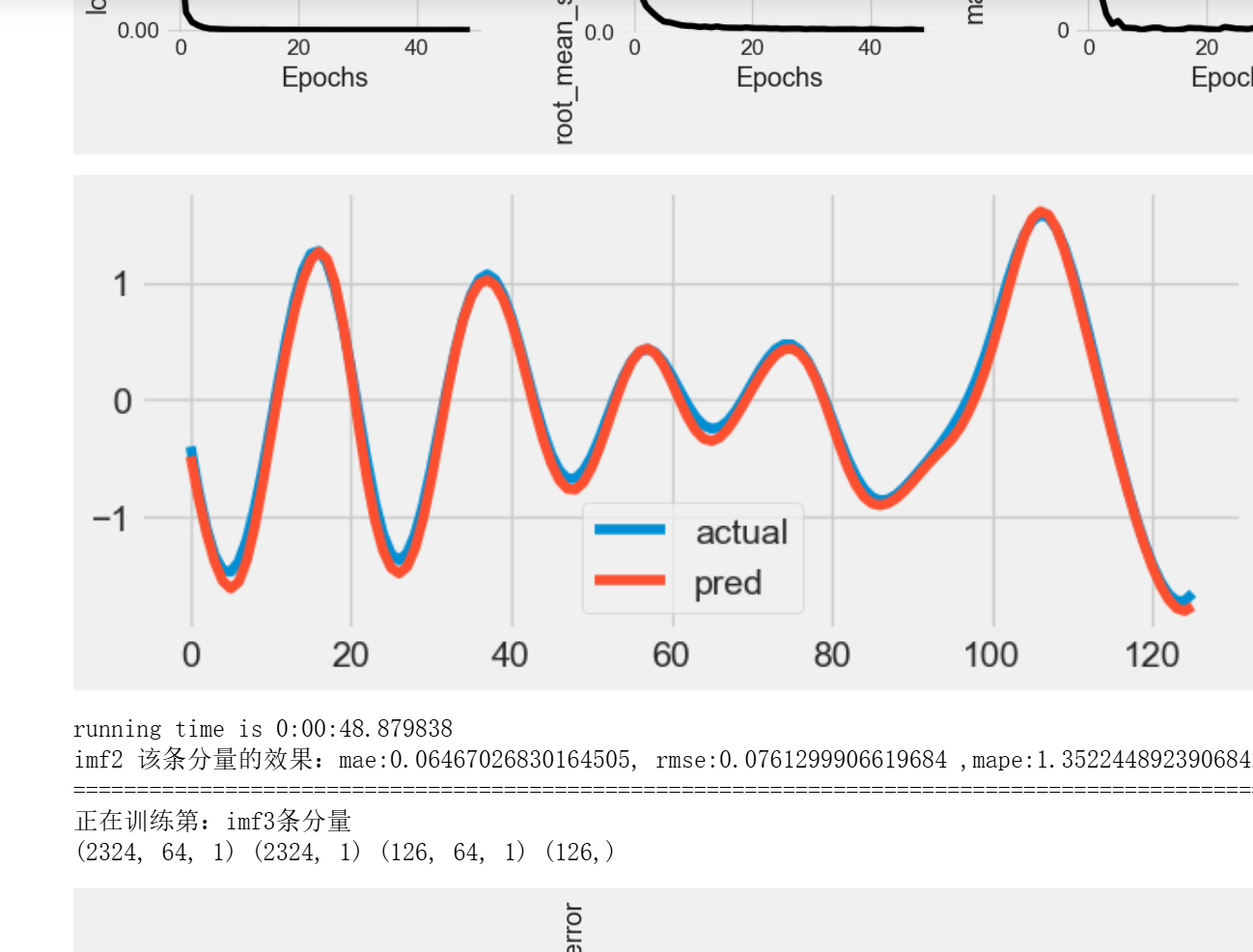

df_preds_all

Both models' predictions are now in the table, so we can compute and compare the evaluation metrics.

Evaluation metrics

For regression problems the usual metrics are MAE, MSE, RMSE, MAPE, R2, and so on, and we use them here too.

Custom metric function

def evaluation2(y_test, y_predict):
    mae = mean_absolute_error(y_test, y_predict)
    mse = mean_squared_error(y_test, y_predict)
    rmse = np.sqrt(mean_squared_error(y_test, y_predict))
    mape=(abs(y_predict -y_test)/ y_test).mean()
    r_2=r2_score(y_test, y_predict)
    return r_2, rmse, mae, mape

df_eval_all=pd.DataFrame(columns=['R_2','RMSE','MAE','MAPE'])

Compute

y_actual=data0.iloc[-len(df_preds_all):]

for col in df_preds_all:
    s=list(evaluation2(y_actual,df_preds_all[col].to_numpy()))
    df_eval_all.loc[f'{col}',:]=s

df_eval_all
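Since R^2 is the one metric not computed by hand earlier, here is a short sketch of its definition on invented numbers: one minus the ratio of the model's squared error to the squared error of always predicting the mean.

```python
import numpy as np

# Made-up actual and predicted values.
y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred = np.array([1.0, 2.0, 3.0, 6.0])

ss_res = np.sum((y_true - y_pred) ** 2)         # squared error of the model
ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # squared error of predicting the mean
r2 = 1 - ss_res / ss_tot
print(ss_res, ss_tot, r2)
```

An R^2 of 1 means a perfect fit, 0 means no better than predicting the mean, and negative values mean worse than that.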

Analysis

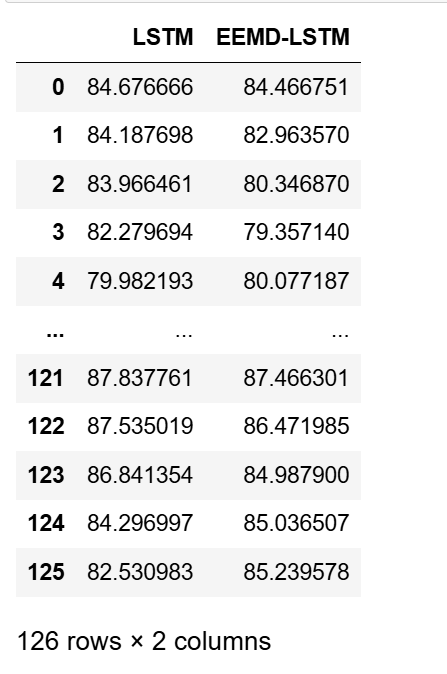

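The table compares the two time series forecasting models (LSTM and EEMD-LSTM) on four metrics (R^2, RMSE, MAE, MAPE). Let's go through them one at a time.

R^2 (coefficient of determination):

LSTM: 0.748385, EEMD-LSTM: 0.96088

R^2 shows that the EEMD-LSTM model fits better than the LSTM model. An R^2 close to 1 means the model explains most of the variation in the target variable, so EEMD-LSTM does better here.

RMSE (root mean squared error):

LSTM: 2.123593, EEMD-LSTM: 0.837343

RMSE measures the gap between predicted and actual values, so lower is better. On this metric EEMD-LSTM clearly beats LSTM, with a smaller forecast error.

MAE (mean absolute error):

LSTM: 1.825414, EEMD-LSTM: 0.678292

MAE also measures forecast error and, like RMSE, lower is better. EEMD-LSTM does better here as well, with a smaller mean absolute error.

MAPE (mean absolute percentage error):

LSTM: 0.022181, EEMD-LSTM: 0.008235

MAPE measures the error relative to the actual values, and again lower is better. EEMD-LSTM clearly beats LSTM on this metric too: about 0.8% against about 2.2%.

In short, EEMD-LSTM does better on every metric: a higher goodness of fit, lower forecast error, and a smaller percentage error. This is probably because the EEMD step (ensemble empirical mode decomposition) helps capture the nonlinear and non-stationary features of the series.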

Visualization

bar_width = 0.4
colors=['c', 'tomato', 'b', 'g', 'm', 'y', 'lime', 'k','orange','pink','grey','tan','gold','r']

fig, ax = plt.subplots(2,2,figsize=(7,5),dpi=128)

# One bar chart per metric, one bar per model
for i,col in enumerate(df_eval_all.columns):
    n=int(str('22')+str(i+1))
    plt.subplot(n)
    df_col=df_eval_all[col]
    m =np.arange(len(df_col))
    plt.bar(x=m,height=df_col.to_numpy(),width=bar_width,color=colors)
    #plt.xlabel('Methods',fontsize=12)
    names=df_col.index
    plt.xticks(range(len(df_col)),names,fontsize=10)
    plt.xticks(rotation=40)
    plt.ylabel(col,fontsize=14)

plt.tight_layout()
#plt.savefig('bar_chart.jpg',dpi=512)
plt.show()

Whichever way you look at it, EEMD-LSTM comes out ahead.

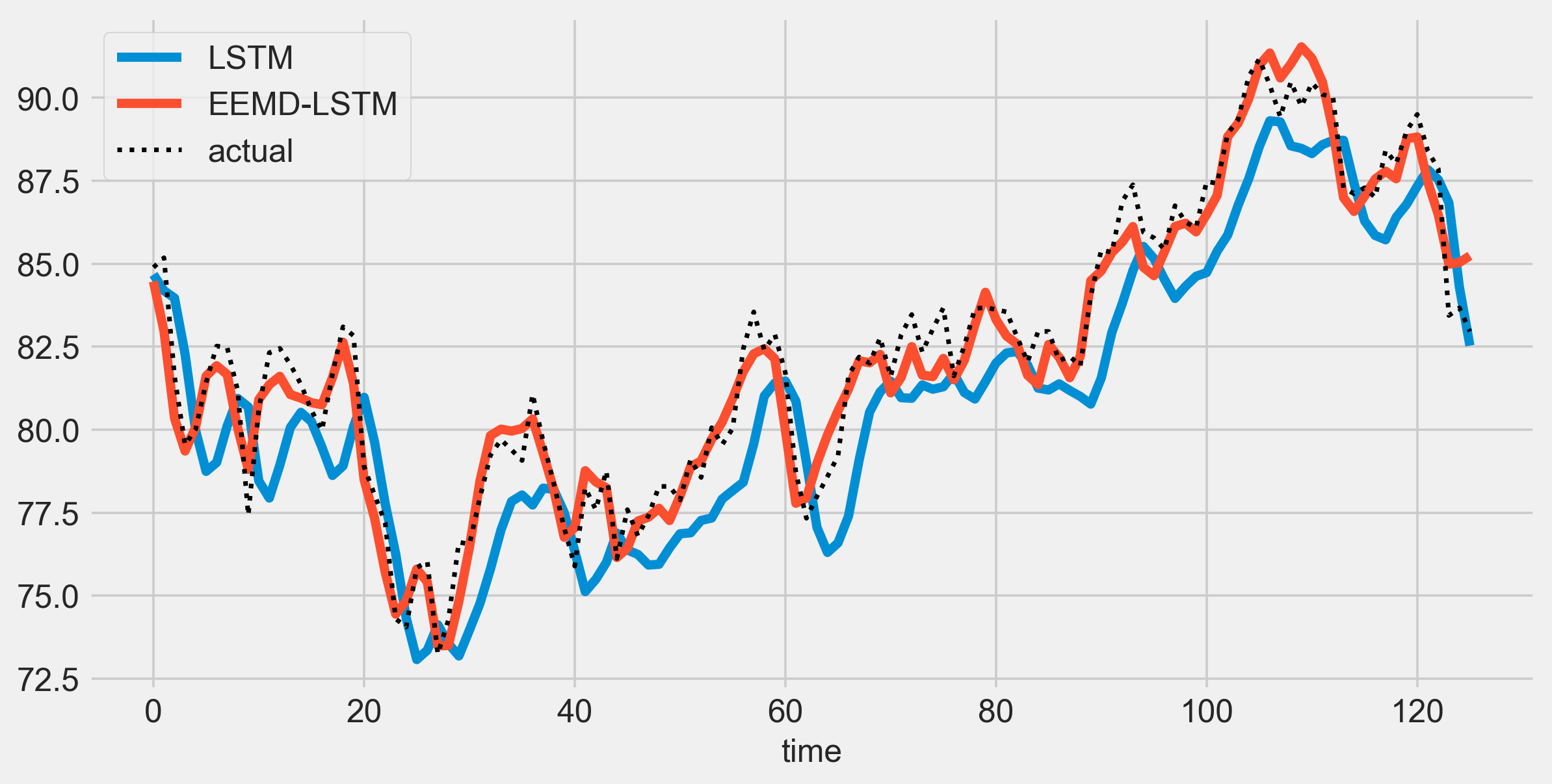

Comparing the forecasts

Just plot both forecasts together with the actual values.

plt.figure(figsize=(10,5),dpi=256)
for i,col in enumerate(df_preds_all.columns):
    plt.plot(df_preds_all[col],label=col) # ,color=colors[i]

plt.plot(y_actual.to_numpy(),label='actual',color='k',linestyle=':',lw=2)

plt.legend()

plt.ylabel('',fontsize=16)

plt.xlabel('time',fontsize=14)

#plt.savefig('point_forecast_comparison.jpg',dpi=256)

plt.show()

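The forecast on the EEMD-decomposed data is better, which is what mode decomposition is for.

You can of course try other decomposition methods such as EMD, CEEMDAN, or VMD, or other neural network models. Everything here is a custom function, so you only need to change the relevant one.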
